RedWolf Security: On the importance of highly distributed global traffic generation for DDoS testing / simulation

December 7, 2016

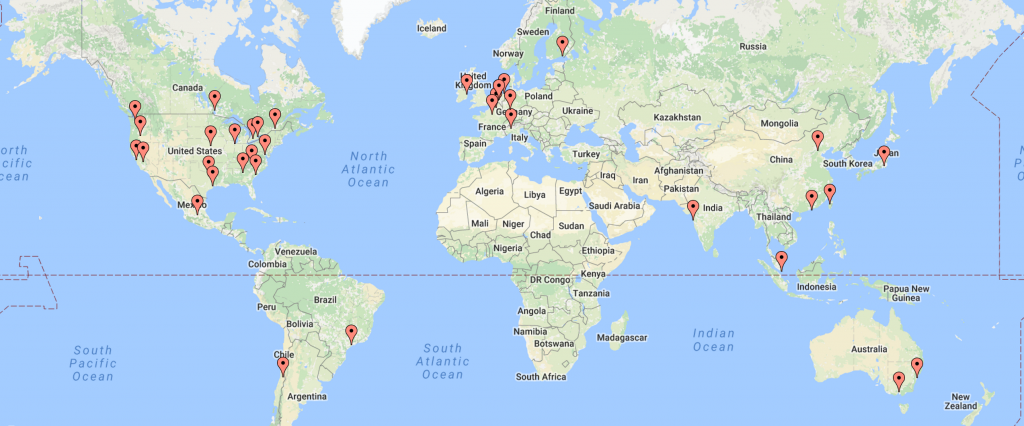

When doing DDoS testing it is important to generate traffic from as many diverse sources as possible. RedWolf Security can generate traffic globally from over 100 data centers in 36 different regions. As of November 2016, all 36 regions also support IPv6. No other provider of DDoS testing / simulation services has such a footprint. And, to pat ourselves on the back, RedWolf was the first to negotiate the legal right to perform high-bandwidth DDoS and stress/load testing with most of the providers we use.

The top benefits of highly distributed traffic:

- Realistic: DDoS attacks are almost always quite distributed both geographically and topologically. RedWolf can source traffic from all over the world, on all the major cloud provider networks. RedWolf was the first to figure out a way to legally launch DDoS from these providers – we started over 10 years ago!

- Avoid Link Saturation: Traffic should be allowed to follow many inbound paths so that it does not saturate a single ISP's network. Legal testing should try to avoid causing collateral damage to the ISP's other clients.

- Determine Link / Routing Preference: Sourcing traffic globally over many different providers and carriers lets you see the 'route-attractiveness' of your ISPs. If you have two ISPs for redundancy, you may think that a DDoS attack will generate fairly equal traffic over each link. This is not the case! The path with the lowest overall cost in terms of routing protocol metrics will get the bulk of the traffic. Sometimes you will also find that some of your upstream ISPs rate-limit or black-hole traffic without your permission. Distributed testing lets you see this practice without having to muck around with pulling BGP route advertisements.

- Calibrate Visibility: Modern network monitoring and attack mitigation systems are quite good at visualizing traffic levels arriving across different geographies and links, but it can still be challenging to get all of these technologies working properly and calibrated. Tests often uncover systemic problems in the configuration and deployment of traffic measurement and monitoring systems. If even 100 Mbit/s of low-packet-rate traffic were injected into a network from the internet, it is very likely that only about 30% of the monitoring systems would measure it accurately. It is important to find out which traffic measurement systems are accurate and timely and which are not.

- Test GSLB: Distributed traffic allows testing of Global Server Load Balancing (GSLB) DNS, including real-time fail-over. GSLB also has a number of weaknesses that can be exploited during a DDoS test; contact RedWolf to find out more.

- Characterize Cloud DDoS Scrubbing Center Mitigation: RedWolf has found that the performance of large cloud DDoS providers often varies between scrubbing centers. DDoS policies can differ from one scrubbing center to another, causing uneven performance and sometimes attack leakage at scrubbing centers that receive a low, but still substantial, volume of DDoS traffic.

- CDN Performance Measurement: Highly distributed traffic allows testing of CDN affinity. RedWolf records the IP addresses of all CDN edge servers it resolves and can capture the traceroute from each agent to each edge server. Web metrics can also be captured from all regions, with full HTTP HAR file capture showing the performance of global CDNs in real time. RedWolf is often used to test CDN performance.

- Test Geo Mitigation: Traffic originating from different countries can test geo-based mitigation systems.

- Makes Layer 7 Trickier to Mitigate: Remote locations make layer 7 attacks trickier to mitigate. The round-trip time (RTT) of packets limits the overall speed of protocols like HTTP, so remote users appear 'slower', and that traffic can be harder to mitigate. RedWolf often observes more attack leakage through mitigation systems from remote attackers than from nearby, low-latency attackers.
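The RTT limit on HTTP can be made concrete with simple arithmetic. The sketch below assumes a simplified client that sends one HTTP request per round trip on each connection (no pipelining or multiplexing, negligible server processing time). Under that assumption a single connection completes at most 1000 / RTT(ms) requests per second. The function names and the 5 ms / 250 ms RTT values are hypothetical and chosen only for illustration; they are not RedWolf measurements or RedWolf's method.

```python
import math

# Illustrative model (an assumption, not RedWolf's method): each connection
# sends one HTTP request, waits a full round trip for the response, then sends
# the next one. Server processing time and TCP/TLS setup are ignored.


def max_requests_per_second(rtt_ms: float, connections: int) -> float:
    """Upper bound on the request rate for sequential requests over `connections`."""
    if rtt_ms <= 0:
        raise ValueError("rtt_ms must be positive")
    per_connection = 1000.0 / rtt_ms  # at most one request per RTT
    return per_connection * connections


def connections_needed(target_rps: float, rtt_ms: float) -> int:
    """Minimum number of concurrent connections needed to reach target_rps."""
    if rtt_ms <= 0:
        raise ValueError("rtt_ms must be positive")
    return math.ceil(target_rps * rtt_ms / 1000.0)


if __name__ == "__main__":
    # Hypothetical RTTs: 5 ms for a nearby agent, 250 ms for a remote one.
    for rtt in (5, 250):
        print(f"RTT {rtt} ms: {max_requests_per_second(rtt, 10):.0f} req/s "
              f"with 10 connections, {connections_needed(1000, rtt)} "
              f"connections for 1000 req/s")
```

With these illustrative numbers, ten connections from the remote agent produce 40 requests per second versus 2000 from the nearby one, and the remote agent needs about 50 times as many concurrent connections to reach the same request rate. Each remote connection therefore carries a much lower request rate.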